Sanctuary AI Demonstrates Zero-Shot In-Hand Manipulation

Hannover Messe is one of the world’s largest industrial trade shows, showcasing innovations in manufacturing, automation, energy, and digitalization. Sanctuary AI had the pleasure of accompanying Microsoft to Messe 2025…

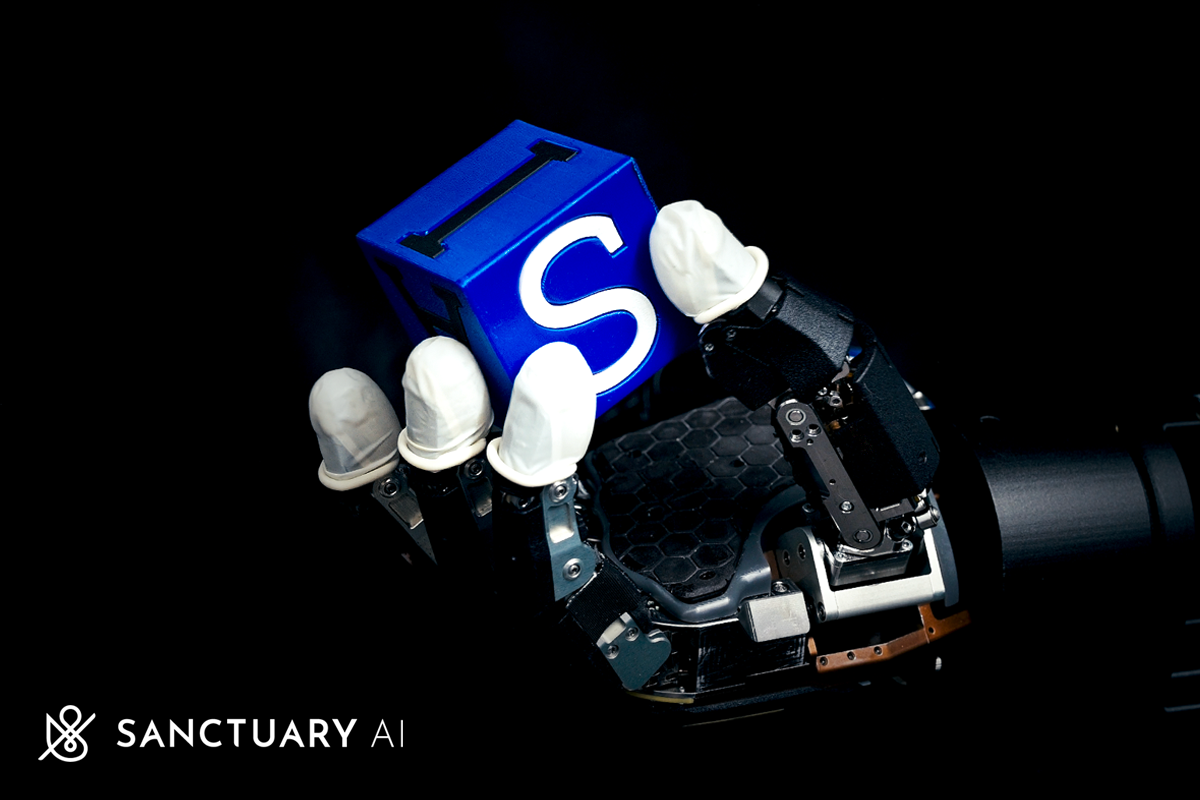

Sanctuary AI has demonstrated advanced manipulation skills that showcase our industry-leading ability to train dexterous policies for our unique, high-performance hydraulic hands…

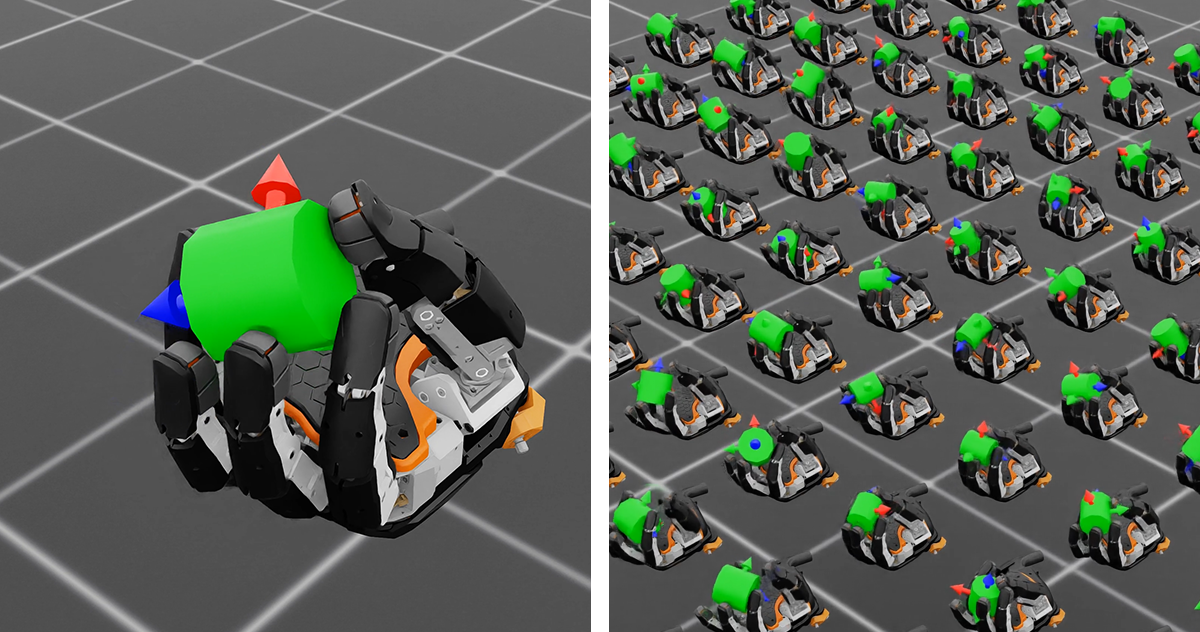

Sanctuary AI has demonstrated industry-leading sim-to-real transfer of learned dexterous manipulation policies for our unique, high-degree-of-freedom, high-strength, high-speed hydraulic hands…

Read the latest news about Sanctuary AI

In Sanctuary AI's latest video, the company's proprietary hydraulic hand autonomously manipulates a lettered cube, continuously reorienting…

Today Sanctuary AI, a company developing physical AI for general-purpose robots, announced the integration of new tactile sensor technology…

Sanctuary AI has been recognized by Morgan Stanley’s Research division as a leader in humanoid robotics intellectual property, ranking third…

Sanctuary AI unveils a new generation of tactile sensor technology optimized to improve dexterous manipulation…

Sanctuary AI presents the eighth generation of its Phoenix general-purpose AI humanoid robots. This new…

Sanctuary AI has achieved a technological milestone on its path to mission success. The company’s 21 degrees…

Sanctuary ranks fourth globally for patent holdings for general purpose robotics and dexterous…